The use of private and public encryption keys is fundamental in the implementation of which of the following?

Diffie-Hellman algorithm

Secure Sockets Layer (SSL)

Advanced Encryption Standard (AES)

Message Digest 5 (MD5)

The use of private and public encryption keys is fundamental in the implementation of Secure Sockets Layer (SSL). SSL is a protocol that provides secure communication over the Internet by using public key cryptography and digital certificates. The key pairs enable the authentication of the parties, the establishment of the shared secret key, and the protection of the data from eavesdropping, tampering, and replay attacks.

The other options do not depend on private and public encryption keys in the same way. The Diffie-Hellman algorithm is a key agreement method: each party combines its own private value with the other party's public value to derive a shared secret, but those values are not used to encrypt or sign data. Advanced Encryption Standard (AES) is a symmetric encryption algorithm that uses a single secret key for both encryption and decryption. Message Digest 5 (MD5) is a hash function that produces a fixed-length output from a variable-length input by means of a one-way mathematical function, and it uses no key at all.
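The distinction between these options can be sketched in a few lines of Python. This is a toy illustration only: the prime `P`, generator `G`, and the private values are assumptions chosen to be small enough to follow by hand, and are far too small for real use.

```python
import hashlib

# Toy Diffie-Hellman parameters (assumed for illustration; not secure).
P = 23  # public prime modulus
G = 5   # public generator


def dh_public(private_value: int) -> int:
    """Compute the public value a party sends to its peer."""
    return pow(G, private_value, P)


def dh_shared(peer_public: int, private_value: int) -> int:
    """Derive the shared secret from the peer's public value."""
    return pow(peer_public, private_value, P)


alice_private, bob_private = 6, 15
alice_public = dh_public(alice_private)
bob_public = dh_public(bob_private)

# Both sides derive the same secret without ever transmitting it.
# Nothing here encrypts or signs a message; the values only agree on a key.
alice_secret = dh_shared(bob_public, alice_private)
bob_secret = dh_shared(alice_public, bob_private)

# MD5 takes no key at all: the same input always yields the same digest.
digest = hashlib.md5(b"message").hexdigest()
```

The shared secret would then typically feed a symmetric cipher such as AES, which again uses one secret key rather than a key pair.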

How does security in a distributed file system using mutual authentication differ from file security in a multi-user host?

Access control can rely on the Operating System (OS), but eavesdropping is

Access control cannot rely on the Operating System (OS), and eavesdropping

Access control can rely on the Operating System (OS), and eavesdropping is

Access control cannot rely on the Operating System (OS), and eavesdropping

Security in a distributed file system using mutual authentication differs from file security in a multi-user host in that access control cannot rely on the Operating System (OS), and eavesdropping is possible. A distributed file system allows users to access files stored on remote servers over a network. Mutual authentication is a process in which both the client and the server verify each other’s identity before establishing a connection. In a distributed file system, access control cannot rely on the OS, because the client’s OS is outside the server’s control and may not apply the same security policies or mechanisms as the remote server. Access control must therefore be implemented outside the local OS, using protocols such as Kerberos or SSL/TLS. Eavesdropping is also possible, because network traffic may be intercepted or modified by malicious parties, so encryption and integrity checks must be used to protect the data in transit. A multi-user host, by contrast, allows multiple users to access files stored on the same local system. There, access control can rely on the OS, because the OS can enforce security policies and mechanisms such as permissions, groups, and roles. Eavesdropping is less likely, because file access does not leave the local host. References: Official (ISC)2 CISSP CBK Reference, Fifth Edition, Domain 3: Security Architecture and Engineering, p. 373-374; CISSP All-in-One Exam Guide, Eighth Edition, Chapter 3: Security Architecture and Engineering, p. 149-150.
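As a rough illustration of mutual authentication, the sketch below has each side prove knowledge of a key by answering the other side's random challenge. It is not Kerberos or SSL/TLS: the pre-shared key, the function names, and the role labels are all assumptions made for this example.

```python
import hashlib
import hmac
import secrets


def respond(key: bytes, challenge: bytes, role: bytes) -> bytes:
    """Answer a challenge with an HMAC bound to the responder's role."""
    return hmac.new(key, role + challenge, hashlib.sha256).digest()


def verify(key: bytes, challenge: bytes, role: bytes, response: bytes) -> bool:
    """Check a response in constant time against the expected HMAC."""
    expected = respond(key, challenge, role)
    return hmac.compare_digest(expected, response)


def mutual_authenticate(client_key: bytes, server_key: bytes) -> bool:
    """Return True only if both parties prove they hold the same key."""
    client_challenge = secrets.token_bytes(16)  # sent by client to server
    server_challenge = secrets.token_bytes(16)  # sent by server to client

    # Each side answers the challenge it received.
    server_proof = respond(server_key, client_challenge, b"server")
    client_proof = respond(client_key, server_challenge, b"client")

    # Each side checks the other's answer with its own copy of the key.
    server_ok = verify(client_key, client_challenge, b"server", server_proof)
    client_ok = verify(server_key, server_challenge, b"client", client_proof)
    return server_ok and client_ok
```

The role label keeps one side's answer from being replayed as the other side's. Authentication alone does not stop eavesdropping, which is why the traffic itself still needs encryption and integrity protection.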

What is the expected outcome of security awareness in support of a security awareness program?

Awareness activities should be used to focus on security concerns and respond to those concerns accordingly

Awareness is not an activity or part of the training but rather a state of persistence to support the program

Awareness is training. The purpose of awareness presentations is to broaden attention of security.

Awareness is not training. The purpose of awareness presentation is simply to focus attention on security.

The expected outcome of security awareness in support of a security awareness program is captured by the statement that awareness is not training and that the purpose of an awareness presentation is simply to focus attention on security. A security awareness program is a set of activities and initiatives that aim to raise awareness and understanding of the security policies, standards, procedures, and guidelines among the employees, contractors, partners, or customers of an organization. Such a program can improve knowledge and skills, change attitudes and behaviors, and empower people to make informed and secure decisions. It can involve various methods and techniques, such as posters, newsletters, emails, videos, quizzes, games, or rewards.

Security awareness is the state of being conscious of security issues and incidents, and of the importance and implications of security. It is not the same as training: it does not aim to teach people how to perform specific security tasks or functions, but rather to inform and remind them of the security policies, standards, procedures, and guidelines, and of their roles and responsibilities in complying with and supporting them. An awareness presentation does not provide detailed or comprehensive guidance; it highlights the key messages and motivates people to pay attention to and care about security.

The other three statements are not the expected outcome, although they may be related. "Awareness activities should be used to focus on security concerns and respond to those concerns accordingly" describes one possible objective of awareness activities, but it does not differentiate awareness from training or state the purpose of an awareness presentation. "Awareness is not an activity or part of the training but rather a state of persistence to support the program" partially defines security awareness, but it does not clearly differentiate awareness from training or state the purpose of an awareness presentation. "Awareness is training. The purpose of awareness presentations is to broaden attention of security" contradicts the definition of security awareness, because it confuses awareness with training.

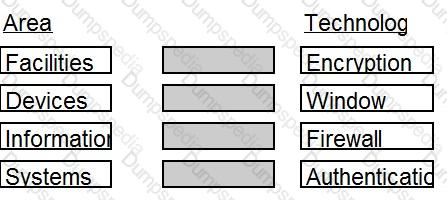

Match the name of access control model with its associated restriction.

Drag each access control model to its appropriate restriction access on the right.

Which of the following is the MOST efficient mechanism to account for all staff during a speedy nonemergency evacuation from a large security facility?

Large mantrap where groups of individuals leaving are identified using facial recognition technology

Radio Frequency Identification (RFID) sensors worn by each employee scanned by sensors at each exit door

Emergency exits with push bars with coordinators at each exit checking off the individual against a predefined list

Card-activated turnstile where individuals are validated upon exit

Section: Security Operations

Which of the following is the MOST effective method to mitigate Cross-Site Scripting (XSS) attacks?

Use Software as a Service (SaaS)

Whitelist input validation

Require client certificates

Validate data output

The most effective method to mitigate Cross-Site Scripting (XSS) attacks is to use whitelist input validation. XSS attacks occur when an attacker injects malicious code, usually in the form of a script, into a web application that is then executed by the browser of an unsuspecting user. XSS attacks can compromise the confidentiality, integrity, and availability of the web application and the user’s data. Whitelist input validation is a technique that checks the user input against a predefined set of acceptable values or characters, and rejects any input that does not match the whitelist. It can prevent XSS attacks by filtering out any malicious or unexpected input that may contain harmful scripts. Whitelist input validation should be applied at the point of entry of the user input, and should be combined with output encoding or sanitization to ensure that any input that is displayed back to the user is safe and harmless.

Using Software as a Service (SaaS), requiring client certificates, and validating data output are not the most effective methods to mitigate XSS attacks, although they may be related or useful techniques. SaaS is a model that delivers software applications over the Internet, usually on a subscription or pay-per-use basis. SaaS can provide some benefits for web security, such as reducing the attack surface, outsourcing the maintenance and patching of the software, and leveraging the expertise and resources of the service provider. However, SaaS does not directly address XSS, as the service provider may still have vulnerabilities or flaws in their web applications that can be exploited by XSS attackers.

Requiring client certificates is a technique that uses digital certificates to authenticate the identity of the clients who access a web application. Client certificates are issued by a trusted certificate authority (CA), and contain the public key and other information of the client. They can provide some benefits for web security, such as enhancing the confidentiality and integrity of the communication, preventing unauthorized access, and enabling mutual authentication. However, client certificates do not directly address XSS, as an authenticated client is still exposed if the web application does not properly validate and encode the user input.

Validating data output is a technique that checks the data that is sent from the web application to the client browser, and ensures that it is correct, consistent, and safe. It can provide some benefits for web security, such as detecting and correcting errors or anomalies in the data, preventing data leakage or corruption, and enhancing the quality and reliability of the web application. However, validating data output is not sufficient on its own to prevent XSS attacks, as the data output may still contain malicious scripts that can be executed by the client browser. It should be complemented with output encoding or sanitization to ensure that any data output that is displayed to the user is safe and harmless.
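The combination of whitelist input validation and output encoding described above can be sketched as follows. The username rule and the function names are hypothetical; a real application would define an allow-list per field and normally rely on its framework's templating to encode output.

```python
import html
import re

# Hypothetical allow-list for a username field:
# letters, digits, and underscore only, 3 to 20 characters.
USERNAME_PATTERN = re.compile(r"[A-Za-z0-9_]{3,20}")


def validate_username(value: str) -> str:
    """Reject any input that does not match the allow-list in full."""
    if USERNAME_PATTERN.fullmatch(value) is None:
        raise ValueError("username contains characters outside the allow-list")
    return value


def render_comment(comment: str) -> str:
    """Encode free text before placing it in an HTML body context."""
    return "<p>" + html.escape(comment) + "</p>"
```

For example, `validate_username("<script>")` raises `ValueError`, and `render_comment("<script>alert(1)</script>")` returns markup in which the angle brackets are encoded, so the browser displays the text instead of running it.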

Match the functional roles in an external audit to their responsibilities.

Drag each role on the left to its corresponding responsibility on the right.


The correct matching of the functional roles and their responsibilities in an external audit is:

Comprehensive Explanation: An external audit is an independent and objective examination of an organization’s financial statements, systems, processes, or performance by an external party. The functional roles and their responsibilities in an external audit are:

References: CISSP All-in-One Exam Guide

Which factors MUST be considered when classifying information and supporting assets for risk management, legal discovery, and compliance?

System owner roles and responsibilities, data handling standards, storage and secure development lifecycle requirements

Data stewardship roles, data handling and storage standards, data lifecycle requirements

Compliance office roles and responsibilities, classified material handling standards, storage system lifecycle requirements

System authorization roles and responsibilities, cloud computing standards, lifecycle requirements

The factors that must be considered when classifying information and supporting assets for risk management, legal discovery, and compliance are data stewardship roles, data handling and storage standards, and data lifecycle requirements. Data stewardship roles are the roles and responsibilities of the individuals or entities who are accountable for the creation, maintenance, protection, and disposal of the information and supporting assets; they include data owners, data custodians, data users, and data stewards. Data handling and storage standards are the policies, procedures, and guidelines that define how the information and supporting assets should be handled and stored, based on their classification level, sensitivity, and value; they include data labeling, data encryption, data backup, data retention, and data disposal. Data lifecycle requirements specify the stages and processes that the information and supporting assets should go through, from creation to destruction; they include data collection, data processing, data analysis, data sharing, data archiving, and data deletion.

System owner roles and responsibilities, data handling standards, and storage and secure development lifecycle requirements are not the factors that must be considered, although they may be related or relevant concepts. System owner roles and responsibilities belong to the individuals or entities who are accountable for the operation, performance, and security of the system that hosts or processes the information and supporting assets; they include system authorization, system configuration, system monitoring, and system maintenance. Data handling standards define how the information should be handled, but not how the supporting assets should be stored, so they are only a subset of data handling and storage standards. Storage and secure development lifecycle requirements specify the stages and processes that the storage and development systems should go through, from inception to decommissioning; they include storage design, implementation, testing, deployment, operation, maintenance, and disposal.

Compliance office roles and responsibilities, classified material handling standards, and storage system lifecycle requirements are likewise not the required factors. Compliance office roles and responsibilities belong to the individuals or entities who are accountable for ensuring that the organization complies with the applicable laws, regulations, standards, and policies; they include compliance planning, compliance assessment, compliance reporting, and compliance improvement. Classified material handling standards define how information and supporting assets that are classified by the government or military should be handled and stored, based on their security level, such as top secret, secret, or confidential; they are a subset of data handling and storage standards. Storage system lifecycle requirements specify the stages and processes that the storage system should go through, from inception to decommissioning; they are a subset of storage and secure development lifecycle requirements.

System authorization roles and responsibilities, cloud computing standards, and lifecycle requirements are also not the required factors. System authorization roles and responsibilities belong to the individuals or entities who are accountable for granting or denying access to the system that hosts or processes the information and supporting assets; they include system identification, system authentication, system authorization, and system auditing. Cloud computing standards define the requirements, specifications, and best practices for the delivery of computing services over the Internet, such as infrastructure as a service (IaaS), platform as a service (PaaS), or software as a service (SaaS); they include cloud service level agreements (SLAs), cloud interoperability, cloud portability, and cloud security. Lifecycle requirements specify the stages and processes that the information and supporting assets should go through, from creation to destruction, and are the same as data lifecycle requirements.

Which of the following would MINIMIZE the ability of an attacker to exploit a buffer overflow?

Memory review

Code review

Message division

Buffer division

Code review is the technique that would minimize the ability of an attacker to exploit a buffer overflow. A buffer overflow is a type of vulnerability that occurs when a program writes more data to a buffer than it can hold, causing the data to overwrite adjacent memory locations, such as the return address or the stack pointer. An attacker can exploit a buffer overflow by injecting malicious code or data into the buffer and altering the execution flow of the program so that it executes the injected content.

Code review involves examining the source code of the program to identify and fix any errors, flaws, or weaknesses that may lead to buffer overflow vulnerabilities. It can help to detect and prevent the use of unsafe or risky functions, such as gets, strcpy, or sprintf, that do not perform any boundary checking on the buffer, and replace them with safer alternatives, such as fgets, strncpy, or snprintf, that limit the amount of data that can be written to the buffer. Code review can also help to enforce and verify the use of secure coding practices and standards, such as input validation, output encoding, error handling, or memory management, that can reduce the likelihood or impact of buffer overflow vulnerabilities.

Memory review, message division, and buffer division are not techniques that would minimize the ability of an attacker to exploit a buffer overflow, although they may be related or useful concepts. Memory review is a process of analyzing the memory layout or content of a program, such as the stack, the heap, or the registers, to understand or debug its behavior or performance. It may help to identify or investigate the occurrence or effect of a buffer overflow, but it does not prevent or mitigate it. Message division is the concept of splitting a message into smaller or fixed-size segments or blocks, as in cryptography or networking. It may help to improve the security or efficiency of message transmission or processing, but it does not prevent or mitigate buffer overflow. Buffer division is the concept of dividing a buffer into smaller or separate buffers, as in buffering or caching. It may help to optimize the memory usage or allocation of the program, but it does not prevent or mitigate buffer overflow.
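Part of the code review described above can be automated. The following is a minimal sketch of a scanner that flags the unbounded C functions named in the explanation and suggests the bounded alternatives it lists. The function name and the sample source are hypothetical, and a plain regular expression will also match calls that appear inside comments or string literals, so this is an aid to a reviewer rather than a replacement for one.

```python
import re

# Unbounded functions and the bounded alternatives named in the text.
UNSAFE_CALLS = {"gets": "fgets", "strcpy": "strncpy", "sprintf": "snprintf"}
CALL_PATTERN = re.compile(r"\b(" + "|".join(UNSAFE_CALLS) + r")\s*\(")


def find_unsafe_calls(c_source: str):
    """Return (line number, function, suggested replacement) per finding."""
    findings = []
    for line_number, line in enumerate(c_source.splitlines(), start=1):
        for match in CALL_PATTERN.finditer(line):
            name = match.group(1)
            findings.append((line_number, name, UNSAFE_CALLS[name]))
    return findings


# Hypothetical C fragment used only to exercise the scanner.
SAMPLE = """\
char buf[16];
gets(buf);
strcpy(buf, input);
snprintf(buf, sizeof buf, "%s", input);
fgets(buf, sizeof buf, stdin);
"""

findings = find_unsafe_calls(SAMPLE)
```

On the sample, only the `gets` and `strcpy` lines are reported; the word-boundary check keeps `fgets` and `snprintf` from being flagged. A reviewer would still need to confirm that each replacement is used correctly, since `strncpy`, for instance, does not null-terminate the destination when the source is too long.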

Who in the organization is accountable for classification of data information assets?

Data owner

Data architect

Chief Information Security Officer (CISO)

Chief Information Officer (CIO)

The person in the organization who is accountable for the classification of data information assets is the data owner. The data owner is the person or entity that has the authority and responsibility for the creation, collection, processing, and disposal of a set of data. The data owner is also responsible for defining the purpose, value, and classification of the data, as well as the security requirements and controls for the data. The data owner should be able to determine the impact of the data on the mission of the organization, which means assessing the potential consequences of losing, compromising, or disclosing the data. The impact of the data on the mission of the organization is one of the main criteria for data classification, which helps to establish the appropriate level of protection and handling for the data. The data owner should also ensure that the data is properly labeled, stored, accessed, shared, and destroyed according to the data classification policy and procedures.

The other options are not the persons in the organization who are accountable for the classification of data information assets, but rather persons who have other roles or functions related to data management. The data architect is the person or entity that designs and models the structure, format, and relationships of the data, as well as the data standards, specifications, and lifecycle. The data architect supports the data owner by providing technical guidance and expertise on the data architecture and quality. The Chief Information Security Officer (CISO) is the person or entity that oversees the security strategy, policies, and programs of the organization, as well as the security performance and incidents. The CISO supports the data owner by providing security leadership and governance, as well as ensuring the compliance and alignment of the data security with the organizational objectives and regulations. The Chief Information Officer (CIO) is the person or entity that manages the information technology (IT) resources and services of the organization, as well as the IT strategy and innovation. The CIO supports the data owner by providing IT management and direction, as well as ensuring the availability, reliability, and scalability of the IT infrastructure and applications.

The configuration management and control task of the certification and accreditation process is incorporated in which phase of the System Development Life Cycle (SDLC)?

System acquisition and development

System operations and maintenance

System initiation

System implementation

The configuration management and control task of the certification and accreditation process is incorporated in the system acquisition and development phase of the System Development Life Cycle (SDLC). The SDLC is a process that involves planning, designing, developing, testing, deploying, operating, and maintaining a system, using various models and methodologies, such as waterfall, spiral, agile, or DevSecOps. The SDLC can be divided into several phases, each with its own objectives and activities, such as system initiation, system acquisition and development, system implementation, and system operations and maintenance.

The certification and accreditation process is a process that involves assessing and verifying the security and compliance of a system, and authorizing and approving the system operation and maintenance, using various standards and frameworks, such as NIST SP 800-37 or ISO/IEC 27001. The certification and accreditation process can be divided into several tasks, each with its own objectives and activities, such as security categorization, security planning, configuration management and control, security assessment, security authorization, and security monitoring.

The configuration management and control task of the certification and accreditation process is incorporated in the system acquisition and development phase of the SDLC, because it can ensure that the system design and development are consistent and compliant with the security objectives and requirements, and that the system changes are controlled and documented. Configuration management and control is a process that involves establishing and maintaining the baseline and the inventory of the system components and resources, such as hardware, software, data, or documentation, and tracking and recording any modifications or updates to the system components and resources, using various techniques and tools, such as version control, change control, or configuration audits.

The other options are not the phases of the SDLC that incorporate the configuration management and control task of the certification and accreditation process, but rather phases that involve other tasks of the certification and accreditation process. System operations and maintenance is a phase of the SDLC that incorporates the security monitoring task of the certification and accreditation process, because it can ensure that the system operation and maintenance are consistent and compliant with the security objectives and requirements, and that the system security is updated and improved. System initiation is a phase of the SDLC that incorporates the security categorization and security planning tasks of the certification and accreditation process, because it can ensure that the system scope and objectives are defined and aligned with the security objectives and requirements, and that the security plan and policy are developed and documented. System implementation is a phase of the SDLC that incorporates the security assessment and security authorization tasks of the certification and accreditation process, because it can ensure that the system deployment and installation are evaluated and verified for the security effectiveness and compliance, and that the system operation and maintenance are authorized and approved based on the risk and impact analysis and the security objectives and requirements.

Refer to the information below to answer the question.

During the investigation of a security incident, it is determined that an unauthorized individual accessed a system which hosts a database containing financial information.

If it is discovered that large quantities of information have been copied by the unauthorized individual, what attribute of the data has been compromised?

Availability

Integrity

Accountability

Confidentiality

The attribute of the data that has been compromised, if large quantities of information have been copied by the unauthorized individual, is confidentiality. Confidentiality is the property that ensures data is accessible or disclosed only to authorized individuals or entities and is protected from unauthorized or malicious access or disclosure. It is compromised when data is copied, stolen, leaked, or exposed by an unauthorized individual or malicious actor, such as the one who accessed the system hosting the database. A compromise of confidentiality can violate the privacy, rights, or interests of the data owners, subjects, or users, and can harm the organization’s operations, reputation, or objectives. Availability, integrity, and accountability are not the attributes compromised by copying alone: they concern whether the data is accessible, whether it is accurate, and whether actions can be attributed to a responsible party, not whether the data is kept secret. References: CISSP All-in-One Exam Guide, Eighth Edition, Chapter 3, Security Architecture and Engineering, page 263. Official (ISC)2 CISSP CBK Reference, Fifth Edition, Chapter 3, Security Architecture and Engineering, page 279.

A minimal implementation of endpoint security includes which of the following?

Trusted platforms

Host-based firewalls

Token-based authentication

Wireless Access Points (AP)

A minimal implementation of endpoint security includes host-based firewalls. Endpoint security is the practice of protecting the devices that connect to a network, such as laptops, smartphones, tablets, or servers, from malicious attacks or unauthorized access. Endpoint security can involve various technologies and techniques, such as antivirus, encryption, authentication, patch management, or device control. Host-based firewalls are one of the basic and essential components of endpoint security, as they provide network-level protection for the individual devices. Host-based firewalls are software applications that monitor and filter the incoming and outgoing network traffic on a device, based on a set of rules or policies. Host-based firewalls can prevent or mitigate some types of attacks, such as denial-of-service, port scanning, or unauthorized connections, by blocking or allowing the packets that match or violate the firewall rules. Host-based firewalls can also provide some benefits for endpoint security, such as enhancing the visibility and the auditability of the network activities, enforcing the compliance and the consistency of the firewall policies, and reducing the reliance and the burden on the network-based firewalls.

Trusted platforms, token-based authentication, and wireless access points (AP) are not the components that are included in a minimal implementation of endpoint security, although they may be related or useful technologies. Trusted platforms are hardware or software components that provide a secure and trustworthy environment for the execution of applications or processes on a device. Trusted platforms can involve various mechanisms, such as trusted platform modules (TPM), secure boot, or trusted execution technology (TXT). Trusted platforms can provide some benefits for endpoint security, such as enhancing the confidentiality and integrity of the data and the code, preventing unauthorized modifications or tampering, and enabling remote attestation or verification. However, trusted platforms are not a minimal or essential component of endpoint security, as they are not widely available or supported on all types of devices, and they may not be compatible or interoperable with some applications or processes.

Token-based authentication is a technique that uses a physical or logical device, such as a smart card, a one-time password generator, or a mobile app, to generate or store a credential that is used to verify the identity of the user who accesses a network or a system. Token-based authentication can provide some benefits for endpoint security, such as enhancing the security and reliability of the authentication process, preventing password theft or reuse, and enabling multi-factor authentication (MFA). However, token-based authentication is not a minimal or essential component of endpoint security, as it does not provide protection for the device itself, but only for the user access credentials, and it may require additional infrastructure or support to implement and manage.

Wireless access points (AP) are hardware devices that allow wireless devices, such as laptops, smartphones, or tablets, to connect to a wired network, such as the Internet or a local area network (LAN). Wireless access points (AP) can provide some benefits for endpoint security, such as extending the network coverage and accessibility, supporting the encryption and authentication mechanisms, and enabling the segmentation and isolation of the wireless network. However, wireless access points (AP) are not a component of endpoint security, as they are not installed or configured on the individual devices, but on the network infrastructure, and they may introduce some security risks, such as signal interception, rogue access points, or unauthorized connections.

Which of the following mobile code security models relies only on trust?

Code signing

Class authentication

Sandboxing

Type safety

Code signing is the mobile code security model that relies only on trust. Mobile code is software that can be transferred from one system to another and executed without installation or compilation. It is used for purposes such as web applications, applets, scripts, and macros, and it can pose security risks such as malicious code, unauthorized access, and data leakage. Mobile code security models are the techniques used to protect systems and users from these threats. Code signing relies only on trust, which means that the security of the mobile code depends on the reputation and credibility of the code provider. In code signing, the provider digitally signs the code with its private key, and the code consumer verifies the signature with the provider’s public key, typically taken from a certificate, before deciding whether to run the code.

Code signing relies only on trust because it does not enforce any security restrictions or controls on the mobile code, but rather leaves the decision to the code consumer. Code signing also does not guarantee the quality or functionality of the mobile code, only the authenticity of the code provider and the integrity of the code. Code signing can be effective if the code consumer knows and trusts the code provider, and if the code provider follows security standards and best practices. However, code signing can be ineffective if the code consumer pays no attention to who the code provider is, or if the code provider is compromised or malicious.

The other options are not mobile code security models that rely only on trust, but rather on other techniques that limit or isolate the mobile code. Class authentication is a mobile code security model that verifies the permissions and capabilities of the mobile code based on its class or type, and allows or denies the execution of the mobile code accordingly. Sandboxing is a mobile code security model that executes the mobile code in a separate and restricted environment, and prevents the mobile code from accessing or affecting the system resources or data. Type safety is a mobile code security model that checks the validity and consistency of the mobile code, and prevents the mobile code from performing illegal or unsafe operations.

Which of the following MUST be scalable to address security concerns raised by the integration of third-party identity services?

Mandatory Access Controls (MAC)

Enterprise security architecture

Enterprise security procedures

Role Based Access Controls (RBAC)

Enterprise security architecture is the framework that defines the security policies, standards, guidelines, and controls that govern the security of an organization’s information systems and assets. Enterprise security architecture must be scalable to address the security concerns raised by the integration of third-party identity services, such as Identity as a Service (IDaaS) or federated identity management. Scalability means that the enterprise security architecture can accommodate the increased complexity, diversity, and volume of identity and access management transactions and interactions that result from the integration of external identity providers and consumers. Scalability also means that the enterprise security architecture can adapt to the changing security requirements and threats that may arise from the integration of third-party identity services.

Which of the following is MOST appropriate to collect evidence of a zero-day attack?

Firewall

Honeypot

Antispam

Antivirus

A honeypot is a decoy system that is designed to attract and trap attackers. A honeypot can be used to collect evidence of a zero-day attack, which is an attack that exploits a previously unknown vulnerability. A honeypot can capture the attacker’s actions, tools, and techniques, and provide valuable information for analysis and mitigation. A honeypot can also divert the attacker’s attention from the real targets and waste their time and resources. A firewall, an antispam, and an antivirus are not effective in detecting or preventing zero-day attacks, as they rely on known signatures or rules that may not match the new attack. References: CISSP Official Study Guide, 9th Edition, page 1010; CISSP All-in-One Exam Guide, 8th Edition, page 1089

Which of the following assures that rules are followed in an identity management architecture?

Policy database

Digital signature

Policy decision point

Policy enforcement point

The component that assures that rules are followed in an identity management architecture is the policy enforcement point. A policy enforcement point is a device or software that implements and enforces the security policies and rules defined by the policy decision point. A policy decision point is a device or software that evaluates and makes decisions about the access requests and privileges of the users or devices based on the security policies and rules. A policy enforcement point can be a firewall, a router, a switch, a proxy, or an application that controls the access to the network or system resources. A policy database, a digital signature, and a policy decision point are not the components that assure that rules are followed in an identity management architecture, as they are related to the storage, verification, or definition of the security policies and rules, not the implementation or enforcement of them. References: CISSP All-in-One Exam Guide, Eighth Edition, Chapter 5, Identity and Access Management, page 664. Official (ISC)2 CISSP CBK Reference, Fifth Edition, Chapter 5, Identity and Access Management, page 680.
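The split between deciding and enforcing can be sketched in a few lines of Python. This is a minimal illustration, not a real identity management product: the `POLICY_DB` contents, the role and resource names, and both function names are assumptions made up for the example.

```python
from dataclasses import dataclass

# Hypothetical policy database: (role, resource) -> permitted actions.
POLICY_DB = {
    ("hr_clerk", "payroll"): {"read"},
    ("hr_manager", "payroll"): {"read", "write"},
}


@dataclass(frozen=True)
class AccessRequest:
    role: str
    resource: str
    action: str


def policy_decision_point(request: AccessRequest) -> bool:
    """Evaluate the request against the stored rules and return a decision."""
    allowed = POLICY_DB.get((request.role, request.resource), set())
    return request.action in allowed


def policy_enforcement_point(request: AccessRequest) -> str:
    """Intercept the request, ask the PDP, and enforce whatever it decides."""
    if not policy_decision_point(request):
        raise PermissionError(
            f"{request.role} may not {request.action} {request.resource}"
        )
    return f"{request.action} on {request.resource} granted to {request.role}"
```

The decision point only answers yes or no. It is the enforcement point that actually blocks the request, which is why it is the component that assures the rules are followed.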

Which security service is served by the process of encrypting plaintext with the sender’s private key and decrypting ciphertext with the sender’s public key?

Confidentiality

Integrity

Identification

Availability

The security service that is served by the process of encrypting plaintext with the sender’s private key and decrypting ciphertext with the sender’s public key is identification. Identification is the process of verifying the identity of a person or entity that claims to be who or what it is. Identification can be achieved by using public key cryptography and digital signatures, which are based on this process. The sender transforms the message (in practice, usually a hash of the message) with its private key, and the receiver reverses the transformation with the sender’s public key; if the result matches, the message must have come from the holder of the private key.

The process of encrypting plaintext with the sender’s private key and decrypting ciphertext with the sender’s public key serves identification because it ensures that only the sender can produce a ciphertext that decrypts correctly under the sender’s public key, so the receiver can verify the sender’s identity by using that public key. This process also provides non-repudiation of origin, which means that the sender cannot deny sending the message, as the ciphertext serves as proof of origin. It does not prove delivery, because the receiver contributes no key to the process.

The other options are not the security services that are served by the process of encrypting plaintext with the sender’s private key and decrypting ciphertext with the sender’s public key. Confidentiality is the process of ensuring that the message is only readable by the intended parties, and it is achieved by encrypting plaintext with the receiver’s public key and decrypting ciphertext with the receiver’s private key. Integrity is the process of ensuring that the message is not modified or corrupted during transmission, and it is achieved by using hash functions and message authentication codes. Availability is the process of ensuring that the message is accessible and usable by the authorized parties, and it is achieved by using redundancy, backup, and recovery mechanisms.
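The private-key and public-key roles described above can be shown with a toy textbook RSA signature. This is only a sketch under loud assumptions: the primes 61 and 53 are tiny demonstration values, there is no padding, and the function names are invented for the example. Real systems should use a vetted cryptographic library.

```python
import hashlib

# Textbook RSA with tiny demonstration primes (p=61, q=53). Insecure by design.
N = 61 * 53   # public modulus (3233)
E = 17        # public exponent, known to every receiver
D = 2753      # private exponent, known only to the sender; (E * D) % 3120 == 1


def digest_as_int(message: bytes) -> int:
    """Reduce a SHA-256 digest into the range of the toy modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def sign(message: bytes) -> int:
    """Sender side: transform the digest with the private exponent."""
    return pow(digest_as_int(message), D, N)


def verify(message: bytes, signature: int) -> bool:
    """Receiver side: reverse the transform with the public exponent and compare."""
    return pow(signature, E, N) == digest_as_int(message)
```

Anyone can run `verify`, because it needs only the public values `E` and `N`, but only the holder of `D` can produce a signature that passes. That asymmetry is what identifies the sender.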

What is the second phase of Public Key Infrastructure (PKI) key/certificate life-cycle management?

Implementation Phase

Initialization Phase

Cancellation Phase

Issued Phase

The second phase of Public Key Infrastructure (PKI) key/certificate life-cycle management is the issued phase. PKI is a system that uses public key cryptography and digital certificates to provide authentication, confidentiality, integrity, and non-repudiation for electronic transactions. PKI key/certificate life-cycle management is the process of managing the creation, distribution, usage, storage, revocation, and expiration of keys and certificates in a PKI system. In the commonly cited model, the life cycle consists of three phases: initialization, issued, and cancellation. The initialization phase comes first and covers end-entity registration, key pair generation, certificate creation, and distribution of the keys and certificate. The issued phase is the second phase, in which the keys and certificate are in operational use; it covers certificate retrieval, certificate validation, key recovery, and key update.

The other options are not the second phase of PKI key/certificate life-cycle management. The implementation phase is not a phase of PKI key/certificate life-cycle management, but rather a phase of PKI system deployment, where the PKI components and policies are installed and configured. The initialization phase is the first phase, where the end entity is registered and the key pair and certificate are created and distributed. The cancellation phase is the third phase, which covers certificate expiration, certificate revocation, key history, and key archive.

What is the BEST location in a network to place Virtual Private Network (VPN) devices when an internal review reveals network design flaws in remote access?

In a dedicated Demilitarized Zone (DMZ)

In its own separate Virtual Local Area Network (VLAN)

At the Internet Service Provider (ISP)

Outside the external firewall

The best location in a network to place Virtual Private Network (VPN) devices when an internal review reveals network design flaws in remote access is in a dedicated Demilitarized Zone (DMZ). A DMZ is a network segment that is located between the internal network and the external network, such as the internet. A DMZ is used to host the services or devices that need to be accessed by both the internal and external users, such as web servers, email servers, or VPN devices. A VPN device is a device that enables the establishment of a VPN, which is a secure and encrypted connection between two networks or endpoints over a public network, such as the internet. Placing the VPN devices in a dedicated DMZ can help to improve the security and performance of the remote access, as well as to isolate the VPN devices from the internal network and the external network. Placing the VPN devices in its own separate VLAN, at the ISP, or outside the external firewall are not the best locations, as they may expose the VPN devices to more risks, reduce the control over the VPN devices, or create a single point of failure for the remote access. References: CISSP All-in-One Exam Guide, Eighth Edition, Chapter 6: Communication and Network Security, page 729; Official (ISC)2 Guide to the CISSP CBK, Fifth Edition, Chapter 4: Communication and Network Security, page 509.

Which technique can be used to make an encryption scheme more resistant to a known plaintext attack?

Hashing the data before encryption

Hashing the data after encryption

Compressing the data after encryption

Compressing the data before encryption

Compressing the data before encryption is a technique that can be used to make an encryption scheme more resistant to a known plaintext attack. A known plaintext attack is a type of cryptanalysis where the attacker has access to some pairs of plaintext and ciphertext encrypted with the same key, and tries to recover the key or decrypt other ciphertexts. A known plaintext attack can exploit the statistical properties or patterns of the plaintext or the ciphertext to reduce the search space or guess the key. Compressing the data before encryption can reduce the redundancy and increase the entropy of the plaintext, making it harder for the attacker to find any correlations or similarities between the plaintext and the ciphertext. Compressing the data before encryption can also reduce the size of the plaintext, making it more difficult for the attacker to obtain enough plaintext-ciphertext pairs for a successful attack.

The other options are not techniques that can be used to make an encryption scheme more resistant to a known plaintext attack, but rather techniques that can introduce other security issues or inefficiencies. Hashing the data before encryption is not a useful technique, as hashing is a one-way function that cannot be reversed, so decrypting the encrypted hash does not recover the original data. Hashing the data after encryption is also not a useful technique, as it does not add any security to the encryption, and the hash can be computed by anyone who has access to the ciphertext. Compressing the data after encryption is not a recommended technique, as well-encrypted ciphertext looks random and therefore barely compresses, and compressing it does nothing to hide patterns in the plaintext from the cipher.
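The claim that compression removes redundancy and raises entropy can be checked with Python’s standard `zlib` module. The sketch below only measures byte-level entropy before and after compression. It does not encrypt anything and is not evidence of resistance to any particular attack. The vocabulary, seed, and function names are assumptions chosen for the example.

```python
import math
import random
import zlib
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Empirical entropy in bits per byte (8.0 is the maximum)."""
    counts = Counter(data)
    total = len(data)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def sample_plaintext(words: int = 2000, seed: int = 0) -> bytes:
    """Build redundant, English-like text from a small made-up vocabulary."""
    vocabulary = [
        "access", "control", "policy", "network", "server", "client",
        "secret", "public", "private", "cipher", "message", "sender",
        "receiver", "system", "user", "file",
    ]
    rng = random.Random(seed)  # fixed seed keeps the sample reproducible
    return " ".join(rng.choice(vocabulary) for _ in range(words)).encode("ascii")


plaintext = sample_plaintext()
compressed = zlib.compress(plaintext, 9)

print(f"plaintext:  {len(plaintext)} bytes, {shannon_entropy(plaintext):.2f} bits/byte")
print(f"compressed: {len(compressed)} bytes, {shannon_entropy(compressed):.2f} bits/byte")
```

The compressed stream is shorter and its byte distribution is much closer to uniform, so less plaintext structure is left for the cipher to process.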

What is the BEST approach to addressing security issues in legacy web applications?

Debug the security issues

Migrate to newer, supported applications where possible

Conduct a security assessment

Protect the legacy application with a web application firewall

Migrating to newer, supported applications where possible is the best approach to addressing security issues in legacy web applications. Legacy web applications are web applications that are outdated, unsupported, or incompatible with current technologies and standards. Their security issues may include vulnerabilities that no longer receive vendor patches and weak or outdated encryption or authentication mechanisms.

Migrating to newer, supported applications where possible is the best approach because it removes these issues at the source rather than working around them: supported applications continue to receive security updates and can use current encryption and authentication mechanisms.

The other options are not the best approaches to addressing security issues in legacy web applications, but rather approaches that can mitigate or remediate the security issues, but not eliminate or prevent them. Debugging the security issues is an approach that can mitigate the security issues in legacy web applications, but not the best approach, because it involves identifying and fixing the errors or defects in the code or logic of the web applications, which may be difficult or impossible to do for the legacy web applications that are outdated or unsupported. Conducting a security assessment is an approach that can remediate the security issues in legacy web applications, but not the best approach, because it involves evaluating and testing the security effectiveness and compliance of the web applications, using various techniques and tools, such as audits, reviews, scans, or penetration tests, and identifying and reporting any security weaknesses or gaps, which may not be sufficient or feasible to do for the legacy web applications that are incompatible or obsolete. Protecting the legacy application with a web application firewall is an approach that can mitigate the security issues in legacy web applications, but not the best approach, because it involves deploying and configuring a web application firewall, which is a security device or software that monitors and filters the web traffic between the web applications and the users or clients, and blocks or allows the web requests or responses based on the predefined rules or policies, which may not be effective or efficient to do for the legacy web applications that have weak or outdated encryption or authentication mechanisms.

Which security access policy contains fixed security attributes that are used by the system to determine a user’s access to a file or object?

Mandatory Access Control (MAC)

Access Control List (ACL)

Discretionary Access Control (DAC)

Authorized user control

The security access policy that contains fixed security attributes that are used by the system to determine a user’s access to a file or object is Mandatory Access Control (MAC). MAC is a type of access control model that assigns permissions to users and objects based on their security labels, which indicate their level of sensitivity or trustworthiness. MAC is enforced by the system or the network, rather than by the owner or the creator of the object, and it cannot be modified or overridden by the users. MAC can enhance the confidentiality and integrity of the data, prevent unauthorized access or disclosure, and support audit and compliance activities. MAC is commonly used in military or government environments, where the data is classified according to its level of sensitivity, such as top secret, secret, confidential, or unclassified. The users are granted a security clearance based on their level of trustworthiness, such as their background, their role, or their need to know. A user can only access objects whose security classification is the same as or lower than the user’s clearance, and an object can only be accessed by users whose clearance is the same as or higher than the object’s classification. This is based on the concept of no read up and no write down, which requires that a user can only read data of lower or equal sensitivity level, and can only write data of higher or equal sensitivity level. In this way, MAC uses fixed security attributes, namely the classification label on each object and the clearance of each user, which the system compares to determine a user’s access to a file or object.
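The no read up and no write down rules described above reduce to a pair of comparisons between fixed labels. The sketch below is a minimal illustration; the `Level` enum and the function names are assumptions for the example, not part of any particular operating system.

```python
from enum import IntEnum


class Level(IntEnum):
    """Fixed security labels, ordered from least to most sensitive."""
    UNCLASSIFIED = 0
    CONFIDENTIAL = 1
    SECRET = 2
    TOP_SECRET = 3


def can_read(clearance: Level, classification: Level) -> bool:
    """No read up: the subject may read objects at or below its clearance."""
    return clearance >= classification


def can_write(clearance: Level, classification: Level) -> bool:
    """No write down: the subject may write objects at or above its clearance."""
    return clearance <= classification
```

For example, a user cleared to `Level.SECRET` can read a `CONFIDENTIAL` file but cannot write to it, and can write to a `TOP_SECRET` file but cannot read it. The user has no way to change either label, which is what makes the control mandatory.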

Which of the following System and Organization Controls (SOC) report types should an organization request if they require a period of time report covering security and availability for a particular system?

SOC 1 Type 1

SOC 1 Type 2

SOC 2 Type 1

SOC 2 Type 2

An organization should request a SOC 2 Type 2 report if they require a period of time report covering security and availability for a particular system. A SOC 2 report is a type of System and Organization Controls (SOC) report that evaluates the controls of a service organization based on the Trust Services Criteria (TSC), which are security, availability, processing integrity, confidentiality, and privacy. A SOC 2 report can be either Type 1 or Type 2. A SOC 2 Type 1 report describes the design and implementation of the controls at a point in time, while a SOC 2 Type 2 report tests the operating effectiveness of the controls over a period of time, usually six or twelve months. A SOC 2 Type 2 report provides more assurance and credibility than a SOC 2 Type 1 report, as it demonstrates how well the controls performed over time. A SOC 2 report can be customized to include only the relevant TSC for a particular system or service. If an organization requires a report covering security and availability, they should request a SOC 2 report that includes only those two TSC.

Refer to the information below to answer the question.

A large, multinational organization has decided to outsource a portion of their Information Technology (IT) organization to a third-party provider’s facility. This provider will be responsible for the design, development, testing, and support of several critical, customer-based applications used by the organization.

The third party needs to have

processes that are identical to that of the organization doing the outsourcing.

access to the original personnel that were on staff at the organization.

the ability to maintain all of the applications in languages they are familiar with.

access to the skill sets consistent with the programming languages used by the organization.

The third party needs to have access to the skill sets consistent with the programming languages used by the organization. The programming languages are the tools or the methods of creating, modifying, testing, and supporting the software applications that perform the functions or the tasks required by the organization. The programming languages can vary in their syntax, semantics, features, or paradigms, and they can require different levels of expertise or experience to use them effectively or efficiently. The third party needs to have access to the skill sets consistent with the programming languages used by the organization, as it can ensure the quality, the compatibility, and the maintainability of the software applications that the third party is responsible for. The third party does not need to have processes that are identical to that of the organization doing the outsourcing, access to the original personnel that were on staff at the organization, or the ability to maintain all of the applications in languages they are familiar with, as they are related to the methods, the resources, or the preferences of the software development, not the skill sets consistent with the programming languages used by the organization. References: CISSP All-in-One Exam Guide, Eighth Edition, Chapter 8, Software Development Security, page 1000. Official (ISC)2 CISSP CBK Reference, Fifth Edition, Chapter 8, Software Development Security, page 1016.

When in the Software Development Life Cycle (SDLC) MUST software security functional requirements be defined?

After the system preliminary design has been developed and the data security categorization has been performed

After the vulnerability analysis has been performed and before the system detailed design begins

After the system preliminary design has been developed and before the data security categorization begins

After the business functional analysis and the data security categorization have been performed

Software security functional requirements must be defined after the business functional analysis and the data security categorization have been performed in the Software Development Life Cycle (SDLC). The SDLC is a process that involves planning, designing, developing, testing, deploying, operating, and maintaining a system, using various models and methodologies, such as waterfall, spiral, agile, or DevSecOps. The SDLC can be divided into several phases, each with its own objectives and activities.

Software security functional requirements are the specific and measurable security features and capabilities that the system must provide to meet the security objectives and requirements. Software security functional requirements are derived from the business functional analysis and the data security categorization, which are two tasks that are performed in the system initiation phase of the SDLC. The business functional analysis is the process of identifying and documenting the business functions and processes that the system must support and enable, such as the inputs, outputs, workflows, and tasks. The data security categorization is the process of determining the security level and impact of the system and its data, based on the confidentiality, integrity, and availability criteria, and applying the appropriate security controls and measures. Software security functional requirements must be defined after the business functional analysis and the data security categorization have been performed, because they can ensure that the system design and development are consistent and compliant with the security objectives and requirements, and that the system security is aligned and integrated with the business functions and processes.

The other options are not the phases of the SDLC when the software security functional requirements must be defined, but rather phases that involve other tasks or activities related to the system design and development. After the system preliminary design has been developed and the data security categorization has been performed is not the phase when the software security functional requirements must be defined, but rather the phase when the system architecture and components are designed, based on the system scope and objectives, and the data security categorization is verified and validated. After the vulnerability analysis has been performed and before the system detailed design begins is not the phase when the software security functional requirements must be defined, but rather the phase when the system design and components are evaluated and tested for the security effectiveness and compliance, and the system detailed design is developed, based on the system architecture and components. After the system preliminary design has been developed and before the data security categorization begins is not the phase when the software security functional requirements must be defined, but rather the phase when the system architecture and components are designed, based on the system scope and objectives, and the data security categorization is initiated and planned.

In which of the following programs is it MOST important to include the collection of security process data?

Quarterly access reviews

Security continuous monitoring

Business continuity testing

Annual security training

Security continuous monitoring is the program in which it is most important to include the collection of security process data. Security process data is the data that reflects the performance, effectiveness, and compliance of the security processes, such as the security policies, standards, procedures, and guidelines. Security process data can include metrics, indicators, logs, reports, and assessments, and it shows whether the security processes are working as intended.

Security continuous monitoring is the program in which it is most important to include the collection of security process data, because it is the program that involves maintaining the ongoing awareness of the security status, events, and activities of the system. Security continuous monitoring can enable the system to detect and respond to any security issues or incidents in a timely and effective manner, and to adjust and improve the security controls and processes accordingly. Security continuous monitoring can also help the system to comply with the security requirements and standards from the internal or external authorities or frameworks.

The other options are not the programs in which it is most important to include the collection of security process data, but rather programs that have other objectives or scopes. Quarterly access reviews are programs that involve reviewing and verifying the user accounts and access rights on a quarterly basis. Quarterly access reviews can ensure that the user accounts and access rights are valid, authorized, and up to date, and that any inactive, expired, or unauthorized accounts or rights are removed or revoked. However, quarterly access reviews are not the programs in which it is most important to include the collection of security process data, because they are not focused on the security status, events, and activities of the system, but rather on the user accounts and access rights. Business continuity testing is a program that involves testing and validating the business continuity plan (BCP) and the disaster recovery plan (DRP) of the system. Business continuity testing can ensure that the system can continue or resume its critical functions and operations in case of a disruption or disaster, and that the system can meet the recovery objectives and requirements. However, business continuity testing is not the program in which it is most important to include the collection of security process data, because it is not focused on the security status, events, and activities of the system, but rather on the continuity and recovery of the system. Annual security training is a program that involves providing and updating the security knowledge and skills of the system users and staff on an annual basis. Annual security training can increase the security awareness and competence of the system users and staff, and reduce the human errors or risks that might compromise the system security. 
However, annual security training is not the program in which it is most important to include the collection of security process data, because it is not focused on the security status, events, and activities of the system, but rather on the security education and training of the system users and staff.

A Virtual Machine (VM) environment has five guest Operating Systems (OS) and provides strong isolation. What MUST an administrator review to audit a user’s access to data files?

Host VM monitor audit logs

Guest OS access controls

Host VM access controls

Guest OS audit logs

Guest OS audit logs are what an administrator must review to audit a user’s access to data files in a VM environment that has five guest OS and provides strong isolation. A VM environment is a system that allows multiple virtual machines (VMs) to run on a single physical machine, each with its own OS and applications. With strong isolation, each guest OS is kept separate from the other guests and from the host.

A guest OS is the OS that runs on a VM, which is different from the host OS that runs on the physical machine. A guest OS can have its own security controls and mechanisms, such as access controls, encryption, authentication, and audit logs. Audit logs are records that capture and store the information about the events and activities that occur within a system or a network, such as the access and usage of the data files. Audit logs can provide a reactive and detective layer of security by enabling the monitoring and analysis of the system or network behavior, and facilitating the investigation and response of the incidents.

Guest OS audit logs are what an administrator must review to audit a user’s access to data files in a VM environment that has five guest OS and provides strong isolation, because they can provide the most accurate and relevant information about the user’s actions and interactions with the data files on the VM. Guest OS audit logs can also help the administrator to identify and report any unauthorized or suspicious access or disclosure of the data files, and to recommend or implement any corrective or preventive actions.

The other options are not what an administrator must review to audit a user’s access to data files in a VM environment that has five guest OS and provides strong isolation, but rather what an administrator might review for other purposes or aspects. Host VM monitor audit logs are records that capture and store the information about the events and activities that occur on the host VM monitor, which is the software or hardware component that manages and controls the VMs on the physical machine. Host VM monitor audit logs can provide information about the performance, status, and configuration of the VMs, but they cannot provide information about the user’s access to data files on the VMs. Guest OS access controls are rules and mechanisms that regulate and restrict the access and permissions of the users and processes to the resources and services on the guest OS. Guest OS access controls can provide a proactive and preventive layer of security by enforcing the principles of least privilege, separation of duties, and need to know. However, guest OS access controls are not what an administrator must review to audit a user’s access to data files, but rather what an administrator must configure and implement to protect the data files. Host VM access controls are rules and mechanisms that regulate and restrict the access and permissions of the users and processes to the VMs on the physical machine. Host VM access controls can provide a granular and dynamic layer of security by defining and assigning the roles and permissions according to the organizational structure and policies. However, host VM access controls are not what an administrator must review to audit a user’s access to data files, but rather what an administrator must configure and implement to protect the VMs.
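Reviewing a guest OS audit log for one user’s file access is, at its simplest, a filter over log records. The sketch below assumes a made-up CSV log layout (`timestamp,user,action,path,result`) and invented sample entries. Real guest operating systems each have their own audit log format and tooling.

```python
import csv
import io

# Invented sample records in a hypothetical CSV audit format.
SAMPLE_LOG = """timestamp,user,action,path,result
2024-01-05T09:12:00,alice,open,/data/payroll.xlsx,success
2024-01-05T09:13:10,bob,open,/data/payroll.xlsx,denied
2024-01-05T09:15:42,alice,write,/data/notes.txt,success
"""


def file_access_by_user(log_text: str, user: str) -> list:
    """Return every audit record in the log that belongs to the given user."""
    reader = csv.DictReader(io.StringIO(log_text))
    return [row for row in reader if row["user"] == user]


for record in file_access_by_user(SAMPLE_LOG, "alice"):
    print(record["timestamp"], record["action"], record["path"], record["result"])
```

Because each guest keeps its own log, the same review would have to be repeated on each of the five guest operating systems the user can reach.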

The BEST way to check for good security programming practices, as well as auditing for possible backdoors, is to conduct

log auditing.

code reviews.

impact assessments.

static analysis.

Code reviews are the best way to check for good security programming practices, as well as auditing for possible backdoors, in a software system. Code reviews involve examining the source code of the software for any errors, vulnerabilities, or malicious code that could compromise the security or functionality of the system. Code reviews can be performed manually by human reviewers, or automatically by tools that scan and analyze the code. The other options are not as effective as code reviews, as they either do not examine the source code directly (A and C), or only detect syntactic or semantic errors, not logical or security flaws (D). References: CISSP All-in-One Exam Guide, Eighth Edition, Chapter 8, page 463; Official (ISC)2 CISSP CBK Reference, Fifth Edition, Chapter 8, page 555.

Which security action should be taken FIRST when computer personnel are terminated from their jobs?

Remove their computer access

Require them to turn in their badge

Conduct an exit interview

Reduce their physical access level to the facility

The first security action that should be taken when computer personnel are terminated from their jobs is to remove their computer access. Computer access is the ability to log in, use, or modify the computer systems, networks, or data of the organization [3]. Removing computer access can prevent the terminated personnel from accessing or harming the organization’s information assets, or from stealing or leaking sensitive or confidential data. Removing computer access can also reduce the risk of insider threats, such as sabotage, fraud, or espionage. Requiring them to turn in their badge, conducting an exit interview, and reducing their physical access level to the facility are also important security actions that should be taken when computer personnel are terminated from their jobs, but they are not as urgent or critical as removing their computer access. References: 3: Official (ISC)2 CISSP CBK Reference, 5th Edition, Chapter 5, page 249.

An organization allows ping traffic into and out of their network. An attacker has installed a program on the network that uses the payload portion of the ping packet to move data into and out of the network. What type of attack has the organization experienced?

Data leakage

Unfiltered channel

Data emanation

Covert channel

The organization has experienced a covert channel attack, which is a technique of hiding or transferring data within a communication channel that is not intended for that purpose. In this case, the attacker has used the payload portion of the ping packet, which is normally used to carry diagnostic data, to move data into and out of the network. This way, the attacker can bypass the network security controls and avoid detection. Data leakage (A) is a general term for the unauthorized disclosure of sensitive or confidential data, which may or may not involve a covert channel. Unfiltered channel (B) is a term for a communication channel that does not have any security mechanisms or filters applied to it, which may allow unauthorized or malicious traffic to pass through. Data emanation (C) is a term for the unintentional radiation or emission of electromagnetic signals from electronic devices, which may reveal sensitive or confidential information to eavesdroppers. References: CISSP All-in-One Exam Guide, Eighth Edition, Chapter 4, page 179; Official (ISC)2 CISSP CBK Reference, Fifth Edition, Chapter 4, page 189.

The use of strong authentication, the encryption of Personally Identifiable Information (PII) on database servers, application security reviews, and the encryption of data transmitted across networks provide

data integrity.

defense in depth.

data availability.

non-repudiation.

Defense in depth is a security strategy that involves applying multiple layers of protection to a system or network to prevent or mitigate attacks. The use of strong authentication, the encryption of Personally Identifiable Information (PII) on database servers, application security reviews, and the encryption of data transmitted across networks are examples of defense in depth measures that can enhance the security of the system or network.

A, C, and D are incorrect because they are not the best terms to describe the security strategy. Data integrity is a property of data that ensures its accuracy, consistency, and validity. Data availability is a property of data that ensures its accessibility and usability. Non-repudiation is a property of data that ensures its authenticity and accountability. While these properties are important for security, they are not the same as defense in depth.

When building a data center, site location and construction factors that increase the level of vulnerability to physical threats include

hardened building construction with consideration of seismic factors.

adequate distance from and lack of access to adjacent buildings.

curved roads approaching the data center.

proximity to high crime areas of the city.

When building a data center, site location and construction factors that increase the level of vulnerability to physical threats include proximity to high crime areas of the city. This factor increases the risk of theft, vandalism, sabotage, or other malicious acts that could damage or disrupt the data center operations. The other options are factors that decrease the level of vulnerability to physical threats, as they provide protection or deterrence against natural or human-made hazards. Hardened building construction with consideration of seismic factors (A) reduces the impact of earthquakes or other natural disasters. Adequate distance from and lack of access to adjacent buildings (B) prevents unauthorized entry or fire spread from neighboring structures. Curved roads approaching the data center (C) slow down the speed of vehicles and make it harder for attackers to ram or bomb the data center. References: CISSP All-in-One Exam Guide, Eighth Edition, Chapter 10, page 637; Official (ISC)2 CISSP CBK Reference, Fifth Edition, Chapter 10, page 699.

Which of the following is considered best practice for preventing e-mail spoofing?

Spam filtering

Cryptographic signature

Uniform Resource Locator (URL) filtering

Reverse Domain Name Service (DNS) lookup

The best practice for preventing e-mail spoofing is to use cryptographic signatures. E-mail spoofing is a technique that involves forging the sender’s address or identity in an e-mail message, usually to trick the recipient into opening a malicious attachment, clicking on a phishing link, or disclosing sensitive information. Cryptographic signatures are digital signatures created by hashing the e-mail message (or a part of it) and signing that hash with the sender’s private key; the signature is attached to the message, and the recipient checks it with the sender’s public key, as in S/MIME or PGP. Because only the holder of the private key can produce a valid signature, the recipient can verify the authenticity of the sender and the integrity of the message, which defeats e-mail spoofing [5]. References: 5: What is Email Spoofing?: How to Prevent Email Spoofing
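The sign-then-verify idea can be sketched in a few lines. This is a minimal illustration only, assuming textbook RSA with no padding and a toy key built from two well-known Mersenne primes; the names `sign`, `verify`, and `digest_as_int` and the sample addresses are hypothetical, and real mail systems rely on S/MIME or PGP libraries rather than anything like this.

```python
import hashlib

# Toy RSA key built from two known Mersenne primes. ASSUMPTION: chosen only
# so the sketch runs with the standard library; it is NOT secure.
P = 2**61 - 1
Q = 2**89 - 1
N = P * Q                          # public modulus
E = 65537                          # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent (needs Python 3.8+)


def digest_as_int(message: bytes) -> int:
    """Hash the message and reduce the digest into the range of the modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def sign(message: bytes) -> int:
    """Sender side: transform the message digest with the PRIVATE key."""
    return pow(digest_as_int(message), D, N)


def verify(message: bytes, signature: int) -> bool:
    """Recipient side: undo the transform with the PUBLIC key and compare."""
    return pow(signature, E, N) == digest_as_int(message)


mail = b"From: alice@example.com\n\nPlease review the attached report."
sig = sign(mail)

# A spoofed message that reuses the genuine signature.
forged = mail.replace(b"alice", b"mallory")

print(verify(mail, sig))    # genuine message
print(verify(forged, sig))  # altered sender
```

The genuine message verifies, while the altered one does not, because the attacker cannot produce a matching signature without the private key.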

Which of the following statements is TRUE of black box testing?

Only the functional specifications are known to the test planner.

Only the source code and the design documents are known to the test planner.

Only the source code and functional specifications are known to the test planner.

Only the design documents and the functional specifications are known to the test planner.

Black box testing is a method of software testing that does not require any knowledge of the internal structure or code of the software [1]. The test planner only knows the functional specifications, which describe what the software is supposed to do, and tests the software based on the expected inputs and outputs. Black box testing is useful for finding errors in the functionality, usability, or performance of the software, but it cannot detect errors in the code or design. White box testing, on the other hand, requires the test planner to have access to the source code and the design documents, and tests the software based on the internal logic and structure [2]. References: 1: CISSP All-in-One Exam Guide, Eighth Edition, Chapter 21, page 1313; 2: CISSP For Dummies, 7th Edition, Chapter 8, page 215.

The stringency of an Information Technology (IT) security assessment will be determined by the

system's past security record.

size of the system's database.

sensitivity of the system's data.

age of the system.

The stringency of an Information Technology (IT) security assessment will be determined by the sensitivity of the system’s data, as this reflects the level of risk and impact that a security breach could have on the organization and its stakeholders. The more sensitive the data, the more stringent the security assessment should be, as it should cover more aspects of the system, use more rigorous methods and tools, and provide more detailed and accurate results and recommendations. The system’s past security record, size of the system’s database, and age of the system are not the main factors that determine the stringency of the security assessment, as they do not directly relate to the value and importance of the data that the system processes, stores, or transmits. References: 3: Common Criteria for Information Technology Security Evaluation; 4: Information technology security assessment - Wikipedia

The birthday attack is MOST effective against which one of the following cipher technologies?

Chaining block encryption

Asymmetric cryptography

Cryptographic hash

Streaming cryptography

The birthday attack is most effective against cryptographic hash, which is one of the cipher technologies. A cryptographic hash is a function that takes an input of any size and produces an output of a fixed size, called a hash or a digest, that represents the input. A cryptographic hash has several properties, such as being one-way, collision-resistant, and deterministic [3]. A birthday attack is a type of brute-force attack that exploits the mathematical phenomenon known as the birthday paradox: in a set of randomly chosen elements, the probability that some pair shares the same value grows much faster than intuition suggests. For an n-bit digest, a collision is therefore expected after roughly 2^(n/2) attempts rather than 2^n. A birthday attack can be used to find collisions in a cryptographic hash, which means finding two different inputs that produce the same hash. Finding collisions can compromise the integrity or the security of the hash, as it can allow an attacker to substitute one input for another without changing the hash. Chaining block encryption, asymmetric cryptography, and streaming cryptography are not as vulnerable to the birthday attack, as they are different types of encryption algorithms that use keys and ciphers to transform the input into an output. References: 3: Official (ISC)2 CISSP CBK Reference, 5th Edition, Chapter 3, page 133; CISSP All-in-One Exam Guide, Eighth Edition, Chapter 3, page 143.
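The arithmetic behind the birthday attack can be checked directly. The sketch below is illustrative only: it computes the exact probability of a shared value for the classic 23-people case, then searches for a collision on SHA-256 truncated to 24 bits. The truncation is an assumption made so the search finishes quickly; the function names are hypothetical, and full SHA-256 is not feasibly attacked this way.

```python
import hashlib
from itertools import count


def shared_value_probability(samples: int, space: int) -> float:
    """Exact probability that at least two of `samples` random draws
    from `space` equally likely values coincide."""
    p_unique = 1.0
    for i in range(samples):
        p_unique *= (space - i) / space
    return 1.0 - p_unique


def truncated_digest(data: bytes, nbytes: int = 3) -> bytes:
    """First `nbytes` bytes of SHA-256: a deliberately weakened hash."""
    return hashlib.sha256(data).digest()[:nbytes]


def find_collision(nbytes: int = 3):
    """Hash the messages b"0", b"1", b"2", ... until two share a truncated digest.
    Returns (first_message, second_message, number_of_messages_hashed)."""
    seen = {}
    for i in count():
        msg = str(i).encode()
        tag = truncated_digest(msg, nbytes)
        if tag in seen:
            return seen[tag], msg, i + 1
        seen[tag] = msg


# 23 people, 365 possible birthdays: slightly better than even odds.
print(round(shared_value_probability(23, 365), 4))

a, b, tries = find_collision()
print(a, b, tries)  # tries is typically in the thousands, far below 2**24
```

With a 24-bit digest, the search usually succeeds after a few thousand messages, on the order of 2^12 rather than 2^24, which is the square-root saving that makes the attack effective against hashes with short outputs.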

Alternate encoding such as hexadecimal representations is MOST often observed in which of the following forms of attack?

Smurf

Rootkit exploit

Denial of Service (DoS)

Cross site scripting (XSS)

Alternate encoding such as hexadecimal representations is most often observed in cross site scripting (XSS) attacks. XSS is a type of web application attack that involves injecting malicious code or scripts into a web page or a web application, usually through user input fields or parameters. The malicious code or script is then executed by the victim’s browser, and can perform various actions, such as stealing cookies, session tokens, or credentials, redirecting to malicious sites, or displaying fake content. Alternate encoding is a technique that is used by attackers to bypass input validation or filtering mechanisms, and to conceal or obfuscate the malicious code or script. Alternate encoding can use hexadecimal, decimal, octal, binary, or Unicode representations of the characters or symbols in the code or script. References: What is Cross-Site Scripting (XSS)?; XSS Filter Evasion Cheat Sheet
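A short sketch shows why hexadecimal (percent) encoding slips past a filter that inspects only the raw input. The filter and helper names here are hypothetical and the check is deliberately simplistic; it is meant to illustrate the evasion, not to serve as a real XSS defense.

```python
import html
from urllib.parse import unquote


def naive_filter_blocks(raw: str) -> bool:
    """A simplistic filter: block input containing a literal <script tag."""
    return "<script" in raw.lower()


def decode_then_check(raw: str) -> bool:
    """Normalize percent-encoding first, then apply the same check."""
    return naive_filter_blocks(unquote(raw))


def render_safely(raw: str) -> str:
    """Decode the input and escape HTML metacharacters before output."""
    return html.escape(unquote(raw))


# "<script>alert(1)</script>" with <, >, and / written as hex escapes.
payload = "%3Cscript%3Ealert(1)%3C%2Fscript%3E"

print(naive_filter_blocks(payload))  # False: the encoded form is missed
print(decode_then_check(payload))    # True: caught after decoding
print(render_safely(payload))        # &lt;script&gt;alert(1)&lt;/script&gt;
```

Decoding once is not sufficient against double-encoded input, which is one reason output encoding is generally preferred over pattern-matching on input.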

What principle requires that changes to the plaintext affect many parts of the ciphertext?

Diffusion

Encapsulation

Obfuscation

Permutation

Diffusion is the principle that requires that changes to the plaintext affect many parts of the ciphertext. Diffusion is a property of a good encryption algorithm that aims to spread the influence of each plaintext bit over many ciphertext bits, so that a small change in the plaintext results in a large change in the ciphertext [2]. Diffusion can increase the security of the encryption by making it harder for an attacker to analyze the statistical patterns or correlations between the plaintext and the ciphertext. Encapsulation, obfuscation, and permutation are not principles that require that changes to the plaintext affect many parts of the ciphertext, as they are related to different aspects of encryption or security. References: 2: CISSP For Dummies, 7th Edition, Chapter 3, page 65.
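The effect of diffusion can be observed by flipping a single input bit and counting how many output bits change. The sketch below uses SHA-256 as a stand-in, which is an assumption of convenience: Python's standard library has no block cipher, and a hash is not an encryption algorithm, but it shows the same avalanche behavior that a well-designed cipher aims for. The helper name `bit_difference` and the sample text are hypothetical.

```python
import hashlib


def bit_difference(a: bytes, b: bytes) -> int:
    """Count the bit positions in which two equal-length byte strings differ."""
    return sum(bin(x ^ y).count("1") for x, y in zip(a, b))


original = b"transfer 100 dollars"
# Flip only the lowest bit of the first byte.
flipped = bytes([original[0] ^ 0x01]) + original[1:]

out_a = hashlib.sha256(original).digest()
out_b = hashlib.sha256(flipped).digest()

input_bits_changed = bit_difference(original, flipped)
changed_bits = bit_difference(out_a, out_b)

print(input_bits_changed)           # 1
print(changed_bits, "of", 8 * len(out_a))
```

With good diffusion, about half of the output bits are expected to change, so the count should land near 128 of 256.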

Which of the following Disaster Recovery (DR) sites is the MOST difficult to test?

Hot site

Cold site

Warm site

Mobile site

A cold site is a backup facility with little or no hardware equipment installed. It is the most cost-effective of the disaster recovery site options, but it takes a lot of time to properly set it up and resume business operations. Because the equipment is not already in place, a test requires first procuring, installing, and configuring hardware and restoring data. Therefore, testing a cold site is the most difficult and time-consuming task.

Which of the following is a potential risk when a program runs in privileged mode?

It may serve to create unnecessary code complexity

It may not enforce job separation duties

It may create unnecessary application hardening

It may allow malicious code to be inserted