A Snowflake Architect is designing an application and tenancy strategy for an organization where strong legal isolation rules as well as multi-tenancy are requirements.

Which approach will meet these requirements if Role-Based Access Policies (RBAC) is a viable option for isolating tenants?

Create accounts for each tenant in the Snowflake organization.

Create an object for each tenant strategy if row level security is viable for isolating tenants.

Create an object for each tenant strategy if row level security is not viable for isolating tenants.

Create a multi-tenant table strategy if row level security is not viable for isolating tenants.

In a scenario where strong legal isolation is required alongside the need for multi-tenancy, the most effective approach is to create separate accounts for each tenant within the Snowflake organization. This approach ensures complete isolation of data, resources, and management, adhering to strict legal and compliance requirements. Role-Based Access Control (RBAC) further enhances security by allowing granular control over who can access what resources within each account. This solution leverages Snowflake’s capabilities for managing multiple accounts under a single organization umbrella, ensuring that each tenant's data and operations are isolated from others.References: Snowflake documentation on multi-tenancy and account management, part of the SnowPro Advanced: Architect learning path.

Following objects can be cloned in snowflake

Permanent table

Transient table

Temporary table

External tables

Internal stages

References: : Cloning Considerations : CREATE TABLE … CLONE : CREATE EXTERNAL TABLE … CLONE : Temporary Tables : Internal Stages

A user, analyst_user has been granted the analyst_role, and is deploying a SnowSQL script to run as a background service to extract data from Snowflake.

What steps should be taken to allow the IP addresses to be accessed? (Select TWO).

ALTERROLEANALYST_ROLESETNETWORK_POLICY='ANALYST_POLICY';

ALTERUSERANALYSTJJSERSETNETWORK_POLICY='ANALYST_POLICY';

ALTERUSERANALYST_USERSETNETWORK_POLICY='10.1.1.20';

USE ROLE SECURITYADMIN;

CREATE OR REPLACE NETWORK POLICY ANALYST_POLICY ALLOWED_IP_LIST = ('10.1.1.20');

USE ROLE USERADMIN;

CREATE OR REPLACE NETWORK POLICY ANALYST_POLICY

ALLOWED_IP_LIST = ('10.1.1.20');

To ensure that an analyst_user can only access Snowflake from specific IP addresses, the following steps are required:

Options A and E mention altering roles or using the wrong role (USERADMIN typically does not manage network security settings), and option C incorrectly attempts to set a network policy directly as an IP address, which is not syntactically or functionally valid.References: Snowflake's security management documentation covering network policies and role-based access controls.

A group of Data Analysts have been granted the role analyst role. They need a Snowflake database where they can create and modify tables, views, and other objects to load with their own data. The Analysts should not have the ability to give other Snowflake users outside of their role access to this data.

How should these requirements be met?

Grant ANALYST_R0LE OWNERSHIP on the database, but make sure that ANALYST_ROLE does not have the MANAGE GRANTS privilege on the account.

Grant SYSADMIN ownership of the database, but grant the create schema privilege on the database to the ANALYST_ROLE.

Make every schema in the database a managed access schema, owned by SYSADMIN, and grant create privileges on each schema to the ANALYST_ROLE for each type of object that needs to be created.

Grant ANALYST_ROLE ownership on the database, but grant the ownership on future [object type] s in database privilege to SYSADMIN.

The requirements state that the data analysts need to be able to create and modify database objects and load data, but should not be able to manage access for users outside of their role.

Option C: By making each schema within the database a managed access schema and having them owned by SYSADMIN, the ability to grant privileges on the schema's objects is strictly controlled. Managed access schemas limit the granting of privileges to the role specified as the owner of the schema, in this case, SYSADMIN. The ANALYST_ROLE can be granted the privileges necessary to create and modify objects within these schemas, satisfying the requirement for the analysts to perform their tasks without being able to extend access beyond their role.

A data platform team creates two multi-cluster virtual warehouses with the AUTO_SUSPEND value set to NULL on one. and '0' on the other. What would be the execution behavior of these virtual warehouses?

Setting a '0' or NULL value means the warehouses will never suspend.

Setting a '0' or NULL value means the warehouses will suspend immediately.

Setting a '0' or NULL value means the warehouses will suspend after the default of 600 seconds.

Setting a '0' value means the warehouses will suspend immediately, and NULL means the warehouses will never suspend.

The AUTO_SUSPEND parameter controls the amount of time, in seconds, of inactivity after which a warehouse is automatically suspended. If the parameter is set to NULL, the warehouse never suspends. If the parameter is set to ‘0’, the warehouse suspends immediately after executing a query. Therefore, the execution behavior of the two virtual warehouses will be different depending on the AUTO_SUSPEND value. The warehouse with NULL value will keep running until it is manually suspended or the resource monitor limits are reached. The warehouse with ‘0’ value will suspend as soon as it finishes a query and release the compute resources. References:

Company A has recently acquired company B. The Snowflake deployment for company B is located in the Azure West Europe region.

As part of the integration process, an Architect has been asked to consolidate company B's sales data into company A's Snowflake account which is located in the AWS us-east-1 region.

How can this requirement be met?

Replicate the sales data from company B's Snowflake account into company A's Snowflake account using cross-region data replication within Snowflake. Configure a direct share from company B's account to company A's account.

Export the sales data from company B's Snowflake account as CSV files, and transfer the files to company A's Snowflake account. Import the data using Snowflake's data loading capabilities.

Migrate company B's Snowflake deployment to the same region as company A's Snowflake deployment, ensuring data locality. Then perform a direct database-to-database merge of the sales data.

Build a custom data pipeline using Azure Data Factory or a similar tool to extract the sales data from company B's Snowflake account. Transform the data, then load it into company A's Snowflake account.

The best way to meet the requirement of consolidating company B’s sales data into company A’s Snowflake account is to use cross-region data replication within Snowflake. This feature allows data providers to securely share data with data consumers across different regions and cloud platforms. By replicating the sales data from company B’s account in Azure West Europe region to company A’s account in AWS us-east-1 region, the data will be synchronized and available for consumption. To enable data replication, the accounts must be linked and replication must be enabled by a user with the ORGADMIN role. Then, a replication group must be created and the sales database must be added to the group. Finally, a direct share must be configured from company B’s account to company A’s account to grant access to the replicated data. This option is more efficient and secure than exporting and importing data using CSV files or migrating the entire Snowflake deployment to another region or cloud platform. It also does not require building a custom data pipeline using external tools.

References:

How does a standard virtual warehouse policy work in Snowflake?

It conserves credits by keeping running clusters fully loaded rather than starting additional clusters.

It starts only if the system estimates that there is a query load that will keep the cluster busy for at least 6 minutes.

It starts only f the system estimates that there is a query load that will keep the cluster busy for at least 2 minutes.

It prevents or minimizes queuing by starting additional clusters instead of conserving credits.

A standard virtual warehouse policy is one of the two scaling policies available for multi-cluster warehouses in Snowflake. The other policy is economic. A standard policy aims to prevent or minimize queuing by starting additional clusters as soon as the current cluster is fully loaded, regardless of the number of queries in the queue. This policy can improve query performance and concurrency, but it may also consume more credits than an economic policy, which tries to conserve credits by keeping the running clusters fully loaded before starting additional clusters. The scaling policy can be set when creating or modifying a warehouse, and it can be changed at any time.

References:

What is a characteristic of event notifications in Snowpipe?

The load history is stored In the metadata of the target table.

Notifications identify the cloud storage event and the actual data in the files.

Snowflake can process all older notifications when a paused pipe Is resumed.

When a pipe Is paused, event messages received for the pipe enter a limited retention period.

Event notifications in Snowpipe are messages sent by cloud storage providers to notify Snowflake of new or modified files in a stage. Snowpipe uses these notifications to trigger data loading from the stage to the target table. When a pipe is paused, event messages received for the pipe enter a limited retention period, which varies depending on the cloud storage provider. If the pipe is not resumed within the retention period, the event messages will be discarded and the data will not be loaded automatically. To load the data, the pipe must be resumed and the COPY command must be executed manually. This is a characteristic of event notifications in Snowpipe that distinguishes them from other options. References: Snowflake Documentation: Using Snowpipe, Snowflake Documentation: Pausing and Resuming a Pipe

In a managed access schema, what are characteristics of the roles that can manage object privileges? (Select TWO).

Users with the SYSADMIN role can grant object privileges in a managed access schema.

Users with the SECURITYADMIN role or higher, can grant object privileges in a managed access schema.

Users who are database owners can grant object privileges in a managed access schema.

Users who are schema owners can grant object privileges in a managed access schema.

Users who are object owners can grant object privileges in a managed access schema.

In a managed access schema, the privilege management is centralized with the schema owner, who has the authority to grant object privileges within the schema. Additionally, the SECURITYADMIN role has the capability to manage object grants globally, which includes within managed access schemas. Other roles, such as SYSADMIN or database owners, do not inherently have this privilege unless explicitly granted.

References: The verified answers are based on Snowflake’s official documentation, which outlines the roles and privileges associated with managed access schemas12.

A company has a table with that has corrupted data, named Data. The company wants to recover the data as it was 5 minutes ago using cloning and Time Travel.

What command will accomplish this?

CREATE CLONE TABLE Recover_Data FROM Data AT(OFFSET => -60*5);

CREATE CLONE Recover_Data FROM Data AT(OFFSET => -60*5);

CREATE TABLE Recover_Data CLONE Data AT(OFFSET => -60*5);

CREATE TABLE Recover Data CLONE Data AT(TIME => -60*5);

This is the correct command to create a clone of the table Data as it was 5 minutes ago using cloning and Time Travel. Cloning is a feature that allows creating a copy of a database, schema, table, or view without duplicating the data or metadata. Time Travel is a feature that enables accessing historical data (i.e. data that has been changed or deleted) at any point within a defined period. To create a clone of a table at a point in time in the past, the syntax is:

CREATE TABLE

The OFFSET parameter specifies the time difference in seconds from the present time. A negative value indicates a point in the past. For example, -60*5 means 5 minutes ago. Alternatively, the TIMESTAMP parameter can be used to specify an exact timestamp in the past. The clone will contain the data as it existed in the source table at the specified point in time12.

References:

What integration object should be used to place restrictions on where data may be exported?

Stage integration

Security integration

Storage integration

API integration

In Snowflake, a storage integration is used to define and configure external cloud storage that Snowflake will interact with. This includes specifying security policies for access control. One of the main features of storage integrations is the ability to set restrictions on where data may be exported. This is done by binding the storage integration to specific cloud storage locations, thereby ensuring that Snowflake can only access those locations. It helps to maintain control over the data and complies with data governance and security policies by preventing unauthorized data exports to unspecified locations.

A user named USER_01 needs access to create a materialized view on a schema EDW. STG_SCHEMA. How can this access be provided?

GRANT CREATE MATERIALIZED VIEW ON SCHEMA EDW.STG_SCHEMA TO USER USER_01;

GRANT CREATE MATERIALIZED VIEW ON DATABASE EDW TO USER USERJD1;

GRANT ROLE NEW_ROLE TO USER USER_01;

GRANT CREATE MATERIALIZED VIEW ON SCHEMA ECW.STG_SCHEKA TO NEW_ROLE;

GRANT ROLE NEW_ROLE TO USER_01;

GRANT CREATE MATERIALIZED VIEW ON EDW.STG_SCHEMA TO NEW_ROLE;

What are characteristics of Dynamic Data Masking? (Select TWO).

A masking policy that Is currently set on a table can be dropped.

A single masking policy can be applied to columns in different tables.

A masking policy can be applied to the value column of an external table.

The role that creates the masking policy will always see unmasked data In query results

A masking policy can be applied to a column with the GEOGRAPHY data type.

Dynamic Data Masking is a feature that allows masking sensitive data in query results based on the role of the user who executes the query. A masking policy is a user-defined function that specifies the masking logic and can be applied to one or more columns in one or more tables. A masking policy that is currently set on a table can be dropped using the ALTER TABLE command. A single masking policy can be applied to columns in different tables using the ALTER TABLE command with the SET MASKING POLICY clause. The other options are either incorrect or not supported by Snowflake. A masking policy cannot be applied to the value column of an external table, as external tables do not support column-level security. The role that creates the masking policy will not always see unmasked data in query results, as the masking policy can be applied to the owner role as well. A masking policy cannot be applied to a column with the GEOGRAPHY data type, as Snowflake only supports masking policies for scalar data types. References: Snowflake Documentation: Dynamic Data Masking, Snowflake Documentation: ALTER TABLE

Which of the following ingestion methods can be used to load near real-time data by using the messaging services provided by a cloud provider?

Snowflake Connector for Kafka

Snowflake streams

Snowpipe

Spark

Snowflake Connector for Kafka and Snowpipe are two ingestion methods that can be used to load near real-time data by using the messaging services provided by a cloud provider. Snowflake Connector for Kafka enables you to stream structured and semi-structured data from Apache Kafka topics into Snowflake tables. Snowpipe enables you to load data from files that are continuously added to a cloud storage location, such as Amazon S3 or Azure Blob Storage. Both methods leverage Snowflake’s micro-partitioning and columnar storage to optimize data ingestion and query performance. Snowflake streams and Spark are not ingestion methods, but rather components of the Snowflake architecture. Snowflake streams provide change data capture (CDC) functionality by tracking data changes in a table. Spark is a distributed computing framework that can be used to process large-scale data and write it to Snowflake using the Snowflake Spark Connector. References:

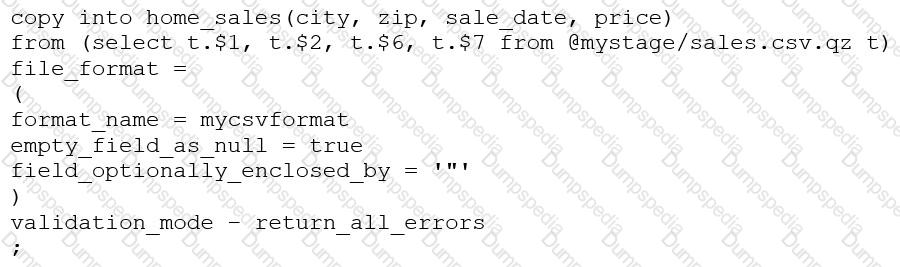

Consider the following COPY command which is loading data with CSV format into a Snowflake table from an internal stage through a data transformation query.

This command results in the following error:

SQL compilation error: invalid parameter 'validation_mode'

Assuming the syntax is correct, what is the cause of this error?

The VALIDATION_MODE parameter supports COPY statements that load data from external stages only.

The VALIDATION_MODE parameter does not support COPY statements with CSV file formats.

The VALIDATION_MODE parameter does not support COPY statements that transform data during a load.

The value return_all_errors of the option VALIDATION_MODE is causing a compilation error.

References: : COPY INTO