Which Snowflake objects track DML changes made to tables, like inserts, updates, and deletes?

Which Snowflake objects can be shared with other Snowflake accounts? (Choose three.)

When unloading data, which file format preserves the data values for floating-point number columns?

A company needs to allow some users to see Personally Identifiable Information (PII) while limiting other users from seeing the full value of the PII.

Which Snowflake feature will support this?

Which file function generates a SnowFlake-hosted URL that must be authenticated when used?

Why would a Snowflake user decide to use a materialized view instead of a regular view?

Which function should be used to insert JSON format string data inot a VARIANT field?

Which columns are part of the result set of the Snowflake LATERAL FLATTEN command? (Choose two.)

What SnowFlake database object is derived from a query specification, stored for later use, and can speed up expensive aggregation on large data sets?

Which service or feature in Snowflake is used to improve the performance of certain types of lookup and analytical queries that use an extensive set of WHERE conditions?

Which role has the ability to create a share from a shared database by default?

Why does Snowflake recommend file sizes of 100-250 MB compressed when loading data?

What is the default file size when unloading data from Snowflake using the COPY command?

A Snowflake Administrator needs to ensure that sensitive corporate data in Snowflake tables is not visible to end users, but is partially visible to functional managers.

How can this requirement be met?

Which data types are supported by Snowflake when using semi-structured data? (Choose two.)

Which snowflake objects will incur both storage and cloud compute charges? (Select TWO)

True or False: Snowpipe via REST API can only reference External Stages as source.

What is used to denote a pre-computed data set derived from a SELECT query specification and stored for later use?

What are supported file formats for unloading data from Snowflake? (Choose three.)

What are the responsibilities of Snowflake's Cloud Service layer? (Choose three.)

Which function is used to convert rows in a relational table to a single VARIANT column?

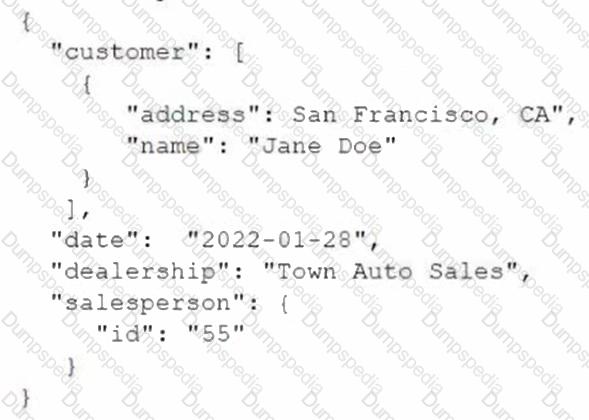

The following JSON is stored in a VARIANT column called src of the CAR_SALES table:

A user needs to extract the dealership information from the JSON.

How can this be accomplished?

In which scenarios would a user have to pay Cloud Services costs? (Select TWO).

A marketing co-worker has requested the ability to change a warehouse size on their medium virtual warehouse called mktg__WH.

Which of the following statements will accommodate this request?

While clustering a table, columns with which data types can be used as clustering keys? (Select TWO).

Which of the following features are available with the Snowflake Enterprise edition? (Choose two.)

A user is preparing to load data from an external stage

Which practice will provide the MOST efficient loading performance?

What is it called when a customer managed key is combined with a Snowflake managed key to create a composite key for encryption?

A Snowflake user wants to temporarily bypass a network policy by configuring the user object property MINS_TO_BYPASS_NETWORK_POLICY.

What should they do?

Files have been uploaded to a Snowflake internal stage. The files now need to be deleted.

Which SQL command should be used to delete the files?

What will happen if a Snowflake user increases the size of a suspended virtual warehouse?

Which Snowflake function will interpret an input string as a JSON document, and produce a VARIANT value?

A virtual warehouse's auto-suspend and auto-resume settings apply to which of the following?

What does the worksheet and database explorer feature in Snowsight allow users to do?

Which Snowflake mechanism is used to limit the number of micro-partitions scanned by a query?

Which feature is integrated to support Multi-Factor Authentication (MFA) at Snowflake?

For non-materialized views, what column in Information Schema and Account Usage identifies whether a view is secure or not?

A Snowflake user has two tables that contain numeric values and is trying to find out which values are present in both tables. Which set operator should be used?

Which query profile statistics help determine if efficient pruning is occurring? (Choose two.)

Which user object property requires contacting Snowflake Support in order to set a value for it?

User INQUISITIVE_PERSON has been granted the role DATA_SCIENCE. The role DATA_SCIENCE has privileges OWNERSHIP on the schema MARKETING of the database ANALYTICS_DW.

Which command will show all privileges granted to that schema?

Which privilege is required for a role to be able to resume a suspended warehouse if auto-resume is not enabled?

Which of the following describes how multiple Snowflake accounts in a single organization relate to various cloud providers?

User-level network policies can be created by which of the following roles? (Select TWO).

Which of the following conditions must be met in order to return results from the results cache? (Select TWO).

Which of the following are handled by the cloud services layer of the Snowflake architecture? (Choose two.)

How does Snowflake recommend handling the bulk loading of data batches from files already available in cloud storage?

Which Snowflake object can be accessed in he FROM clause of a query, returning a set of rows having one or more columns?

What file formats does Snowflake support for loading semi-structured data? (Choose three.)

Which Snowflake feature will allow small volumes of data to continuously load into Snowflake and will incrementally make the data available for analysis?

What statistical information in a Query Profile indicates that the query is too large to fit in memory? (Select TWO).

What effect does WAIT_FOR_COMPLETION = TRUE have when running an ALTER WAREHOUSE command and changing the warehouse size?

A Snowflake user has been granted the create data EXCHANGE listing privilege with their role.

Which tasks can this user now perform on the Data Exchange? (Select TWO).

Which view can be used to determine if a table has frequent row updates or deletes?

How does a Snowflake user extract the URL of a directory table on an external stage for further transformation?

Which operations are handled in the Cloud Services layer of Snowflake? (Select TWO).

What service is provided as an integrated Snowflake feature to enhance Multi-Factor Authentication (MFA) support?

A company needs to read multiple terabytes of data for an initial load as part of a Snowflake migration. The company can control the number and size of CSV extract files.

How does Snowflake recommend maximizing the load performance?

For the ALLOWED VALUES tag property, what is the MAXIMUM number of possible string values for a single tag?

How can a data provider ensure that a data consumer is going to have access to the required objects?

Which of the following is a valid source for an external stage when the Snowflake account is located on Microsoft Azure?

Which of the following Snowflake features provide continuous data protection automatically? (Select TWO).

During periods of warehouse contention which parameter controls the maximum length of time a warehouse will hold a query for processing?

A user has 10 files in a stage containing new customer data. The ingest operation completes with no errors, using the following command:

COPY INTO my__table FROM @my__stage;

The next day the user adds 10 files to the stage so that now the stage contains a mixture of new customer data and updates to the previous data. The user did not remove the 10 original files.

If the user runs the same copy into command what will happen?

True or False: It is possible for a user to run a query against the query result cache without requiring an active Warehouse.

Which of the following compute resources or features are managed by Snowflake? (Select TWO).

The Information Schema and Account Usage Share provide storage information for which of the following objects? (Choose three.)

A user needs to create a materialized view in the schema MYDB.MYSCHEMA.

Which statements will provide this access?

Which semi-structured file formats are supported when unloading data from a table? (Select TWO).

When unloading to a stage, which of the following is a recommended practice or approach?

Which Snowflake object enables loading data from files as soon as they are available in a cloud storage location?

How should a virtual warehouse be configured if a user wants to ensure that additional multi-clusters are resumed with no delay?

In an auto-scaling multi-cluster virtual warehouse with the setting SCALING_POLICY = ECONOMY enabled, when is another cluster started?

What happens to the objects in a reader account when the DROP MANAGED ACCOUNT command is executed?

What objects in Snowflake are supported by Dynamic Data Masking? (Select TWO).'

A user with which privileges can create or manage other users in a Snowflake account? (Select TWO).

Which metadata table will store the storage utilization information even for dropped tables?

What function can be used with the recursive argument to return a list of distinct key names in all nested elements in an object?

How can a Snowflake user validate data that is unloaded using the COPY INTO

Which Snowflake feature provides increased login security for users connecting to Snowflake that is powered by Duo Security service?

Which Snowflake database object can be used to track data changes made to table data?

Which VALIDATION_MODE value will return the errors across the files specified in a COPY command, including files that were partially loaded during an earlier load?

Which commands are restricted in owner's rights stored procedures? (Select TWO).

What is the purpose of the STRIP NULL_VALUES file format option when loading semi-structured data files into Snowflake?

Which statements describe benefits of Snowflake's separation of compute and storage? (Select TWO).

Who can activate and enforce a network policy for all users in a Snowflake account? (Select TWO).

A Snowflake account has activated federated authentication.

What will occur when a user with a password that was defined by Snowflake attempts to log in to Snowflake?

How can a Snowflake administrator determine which user has accessed a database object that contains sensitive information?

Which parameter can be set at the account level to set the minimum number of days for which Snowflake retains historical data in Time Travel?

What metadata does Snowflake store for rows in micro-partitions? (Select TWO).

When using the ALLOW CLIENT_MFA_CACHING parameter, how long is a cached Multi-Factor Authentication (MFA) token valid for?

Which Snowflake command can be used to unload the result of a query to a single file?

Which data types can be used in Snowflake to store semi-structured data? (Select TWO)

How does the Access_History view enhance overall data governance pertaining to read and write operations? (Select TWO).

What do temporary and transient tables have m common in Snowflake? (Select TWO).

Given the statement template below, which database objects can be added to a share?(Select TWO).

GRANT

Based on Snowflake recommendations, when creating a hierarchy of custom roles, the top-most custom role should be assigned to which role?

Which statements reflect valid commands when using secondary roles? (Select TWO).

Which table function should be used to view details on a Directed Acyclic Graphic (DAG) run that is presently scheduled or is executing?

What does Snowflake recommend a user do if they need to connect to Snowflake with a tool or technology mat is not listed in Snowflake partner ecosystem?

Which Snowflake feature records changes mace to a table so actions can be taken using that change data capture?

Which function returns an integer between 0 and 100 when used to calculate the similarity of two strings?

Which function can be used with the copy into

Which command is used to lake away staged files from a Snowflake stage after a successful data ingestion?

Which file function provides a URL with access to a file on a stage without the need for authentication and authorization?

What are potential impacts of storing non-native values like dates and timestamps in a variant column in Snowflake?

What is a non-configurable feature that provides historical data that Snowflake may recover during a 7-day period?